Machine learning engineering involves complex, multi-step workflows: understanding a problem, selecting appropriate models, writing training and inference code, debugging, hyperparameter tuning, and evaluating results. These tasks are time-consuming even for experienced practitioners and often require repeated trial and error across many design choices.

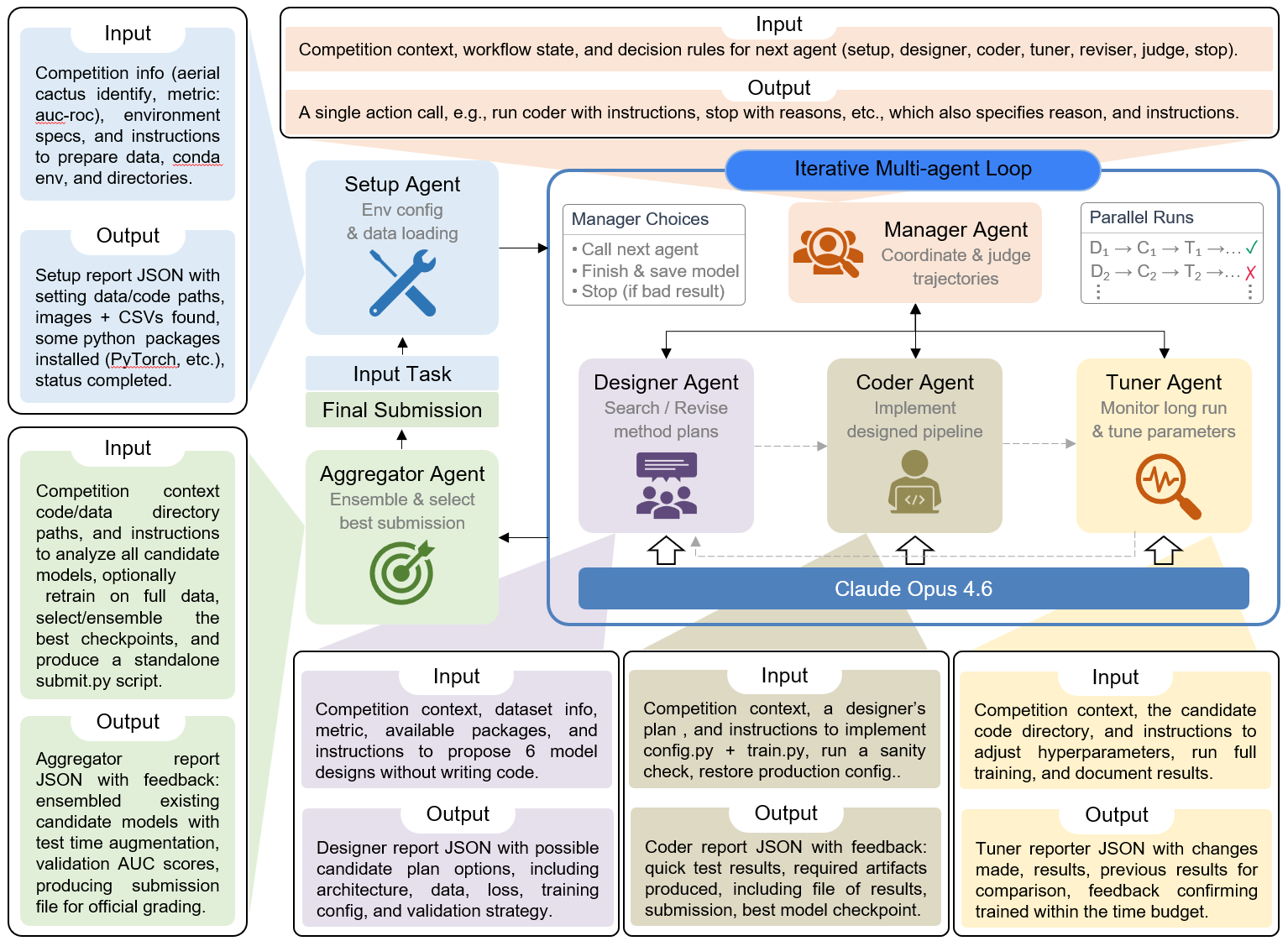

We introduce AIBuildAI, a framework for autonomous machine learning engineering powered by an iterative multi-agent loop. AIBuildAI decomposes the entire ML pipeline into six specialized roles (setup, management, design, coding, tuning, and aggregation), each handled by a dedicated AI agent built on top of Claude Opus 4.6. A central Manager Agent orchestrates the workflow, iteratively dispatching tasks to the specialized agents until the best result is achieved.
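
To make the orchestration pattern concrete, here is a minimal Python sketch of a manager loop that dispatches work to role-specific agents. It is an illustration under stated assumptions, not AIBuildAI's actual code: the `Workspace` class, the `run_manager` function, the stub agents, the fixed iteration budget, and the placeholder scores are all hypothetical, and no model is called. Only the role names come from the description above.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Workspace:
    """Shared state handed to every agent (hypothetical structure)."""
    dispatched: List[str] = field(default_factory=list)  # roles, in call order
    scores: List[float] = field(default_factory=list)    # one score per iteration
    best_score: Optional[float] = None


Agent = Callable[[Workspace], None]


def noop_agent(ws: Workspace) -> None:
    """Stand-in for an LLM-backed agent; a real agent would do work here."""


def make_stub_tuner(placeholder_scores: List[float]) -> Agent:
    """Return a tuner that records one made-up validation score per call."""
    remaining = iter(placeholder_scores)

    def tune(ws: Workspace) -> None:
        ws.scores.append(next(remaining))

    return tune


def aggregate(ws: Workspace) -> None:
    """Assumed aggregation rule: keep the best score seen so far."""
    ws.best_score = max(ws.scores)


def run_manager(agents: Dict[str, Agent], max_iterations: int) -> Workspace:
    """Manager role: run setup once, loop over design/coding/tuning, then aggregate.

    The fixed iteration budget is an assumption made for this sketch; the
    stopping criterion of the real system is not specified here.
    """
    ws = Workspace()

    def dispatch(role: str) -> None:
        ws.dispatched.append(role)
        agents[role](ws)

    dispatch("setup")
    for _ in range(max_iterations):
        for role in ("design", "coding", "tuning"):
            dispatch(role)
    dispatch("aggregation")
    return ws


agents: Dict[str, Agent] = {
    "setup": noop_agent,
    "design": noop_agent,
    "coding": noop_agent,
    "tuning": make_stub_tuner([0.70, 0.82, 0.78]),  # placeholder values only
    "aggregation": aggregate,
}

result = run_manager(agents, max_iterations=3)
print(result.dispatched)
print(result.best_score)
```

In this sketch the manager is plain control flow rather than an agent of its own, which keeps the dispatch order easy to inspect; a real system would presumably let the manager choose the next role dynamically based on intermediate results.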